A recent emphasis on sociomateriality appears to have entered the IS literature because of discussions by Orlikowski (2010) and the excellent empirical study of Volkoff et al. (2007). Now that people have been sensitized to the literature on material practice, actor-network theory is classified as “tired and uninformative” [1]. Which leads me to wonder just how many IS academics have actually read the actor-network theorists? Or pondered how this applies to technology design?

Long before people started discussing socio-material “assemblages,” Bruno Latour (1987)and John Law (1987) were discussing how technology developed by means of “heterogeneous networks” of material and human actants, the combination of which directs the trajectory of technology design and form. Latour (1999) suggests that he should recall the term “actor-network,” as this is too easily confused with the world-wide web. Yet actor-networking – in the sense of a web of connectivity, where heterogeneous interactions between diverse individuals, between virtually-mediated groups, and between individuals and material forms of embedded intentionality – is exactly what is going on in today’s organizations.

In addition, Michel Callon’s (1986) work on how the “problematization” of a situation in ways that aligns the interests of others leads to their enrolment in a network of support for a specific technological frame. Once support has been enrolled, such networks endow irreversibility, which makes changes to the accepted form of a technology solution incredibly difficult. So we have paradigms that are embedded in a specific design. Akrich coined the term “script” to define the performativity of technology and the term was adopted by the other leading actor-network theorists [2]. This thread of work articulates incredibly deeply the ways in which technology design directs its users (and maintainers) into a set of roles and worldviews that are difficult to escape. We must “de-script” technology to repurpose it to other networks and other applications – which is much more difficult than one would suppose, given the embedded social worlds that are carried across networks of practice with the use of common technologies (Akrich 1992).

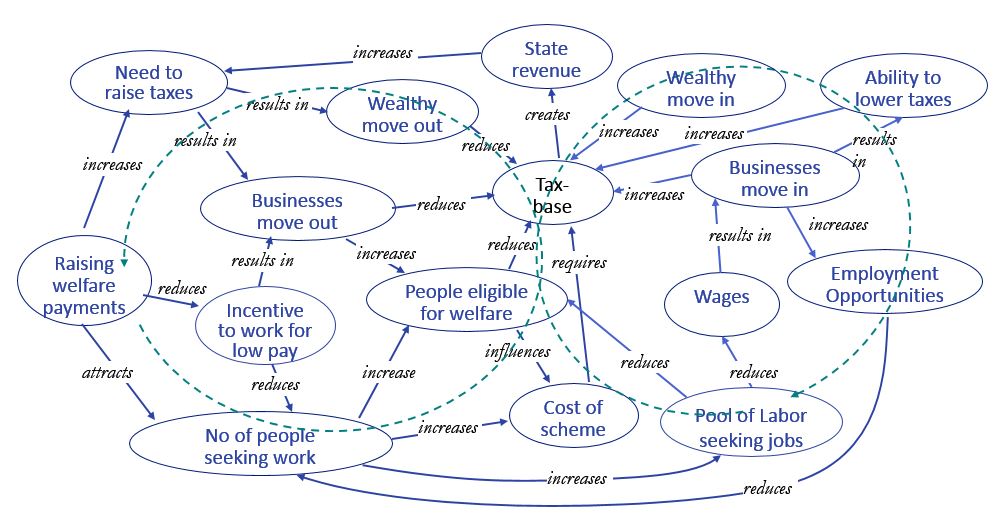

So what does actor-network theory give us? It provides a conceptual and practical approach to understanding and modeling why design takes specific forms – and what needs to be “undone” for a design to be conceived differently than in the past [3]. It provides a rationale for understanding technology as a network actor in its own right, influencing behavior and constraining discovery. The assumptional frameworks for action embedded in – for example – a software book-pricing application will direct the evaluation of price alternatives in ways that reflects the model of decision-making adopted by the software’s author. This results in the type of stupid automaticity that recently saw an Amazon book priced at $23,698,655.93 (plus $3.99 shipping). The cause of this pricing glitch was traced back to an actor-network of two competing sellers, unknowingly connected via their use of the same automated pricing software [4].

Finally, I want to observe that a lot of the recent “materiality of practice” literature has identified new phenomena and new mechanisms of actor-networks. For example Knorr Cetina (1999) has sensitized us to how epistemology is embedded in socio-technical assemblages, Rheinberger (1997) has demonstrated how some technical objects are associated with emergence while others enforce standardization and Henderson (1999) demonstrates how the use of specific representations can conscript others around an organizational power-base. But I would argue that these effects can be understood by using Actor-Network Theory as one’s underpinning epistemology – and that exploring actor-network interactions continues to reveal ever newer mechanisms that are relevant to how we work today. I would strongly recommend Bruno Latour’s latest book, Reassembling The Social.

Notes:

[1] I have to declare an interest here – this comment was contained in a review of one of my papers … 🙂

[2] As Latour (1992) argues: “Following Madeleine Akrich’s lead (Akrich 1992), we will speak only in terms of scripts or scenes or scenarios … played by human or nonhuman actants, which may be either figurative or nonfigurative.”

[3] One of my favorite papers on the topic of irreversibility in design is ‘How The Refrigerator Got Its Hum,’ by Ruth Cowan (1995). Another good read is the introduction to the same book by MacKenzie and Wajcman (1999).

[4] The amusing outcome is recounted by Michael Eisen, at http://www.michaeleisen.org/blog/?p=358

References:

Akrich, M. 1992. The De-Scription Of Technical Objects. W.E. Bijker, J. Law, eds. Shaping Technology/Building Society: Studies In Sociotechnical Change. MIT Press, Cambridge, MA, 205-224.

Callon, M. 1986. “Some elements of a sociology of translation: domestication of the scallops and the fishermen of St Brieuc Bay.” J. Law, ed. Power, Action, and Belief: a New Sociology of Knowledge? Socioogical Review Monograph 32. Routledge and Kegan Paul, London, 196-233.

Cowan, R.S. 1995. “How the Refrigerator Got its Hum.” D. Mackenzie, J. Wajcman, eds. The Social Shaping of Technology. Open University Press, Buckingham UK, 281-300.

Henderson, K. 1999. On Line and on Paper: Visual Representations, Visual Culture,and Computer Graphics in Design Engineering. MIT Press, Harvard MA.

Knorr Cetina, K.D. 1999. Epistemic Cultures: How the Sciences Make Knowledge. Harvard Univ. Press, Cambridge, MA.

Latour, B. 1987. Science in Action. Harvard University Press, Cambridge MA.

Latour, B. 1992. “Where Are the Missing Masses? The Sociology of a Few Mundane Artifacts.” W.E. Bijker, J. Law, eds. Shaping Technology/Building Society: Studies In Sociotechnical Change. MIT Press, Cambridge MA.

Latour, B. 1999. “On Recalling ANT.” J. Law, J. Hassard, eds. Actor Network and After. Blackwell, Oxford, UK 15-25.

Law, J. 1987. “Technology and Heterogeneous Engineering – The Case Of Portugese Expansion.” W.E. Bijker, T.P. Hughes, T.J. Pinch, eds. The Social Construction of Technological Systems: New Directions in the Sociology and History of Technology. MIT Press, Cambridge MA.

MacKenzie, D.A., J. Wajcman. 1999. Introductory Essay. D.A. Mackenzie, J. Wajcman, eds. The Social Shaping Of Technology, 2nd. ed. Open University Press, Milton Keynes UK, 3-27.

Orlikowski, W. 2010. “The sociomateriality of organisational life: considering technology in management research.” Cambridge Journal of Economics 34(1) 125-141.

Rheinberger, H.-J. 1997. Experimental Systems and Epistemic Things Toward a History of Epistemic Things: Synthesizing Proteins in the Test Tube. Stanford University Press, Stanford CA, 24-37.

Volkoff, O., D.M. Strong, M.B. Elmes. 2007. “Technological Embeddedness and Organizational Change.” Organization Science 18(5) 832-848.